HBM3 has everything in place except the finalized standard

HBM3

From the PC era to the mobile and AI era, chip architecture has shifted from CPU-centric to data-centric. AI challenges not only a chip's computing power but also its memory bandwidth. Even though DDR and GDDR data rates are relatively high, many AI algorithms and neural networks repeatedly run into memory bandwidth limits. HBM, which is built for high bandwidth, has become the preferred DRAM solution for high-performance chips in data centers and HPC.

At the moment, JEDEC has not yet published the final HBM3 standard, but the IP vendors taking part in drafting it are already prepared. Not long ago, Rambus was the first to announce a memory subsystem that supports HBM3. More recently, Synopsys announced what it calls the industry's first complete HBM3 IP and verification solution.

IP vendors go first

In June of this year, Taiwan's Global Unichip Corp. (GUC) released an AI/HPC/networking platform based on TSMC's CoWoS technology, equipped with an HBM3 controller and PHY IP with an I/O speed of up to 7.2Gbps. GUC is also applying for a patent on interposer routing that supports zigzag routing at any angle and allows the HBM3 IP to be split across two SoCs.

The complete HBM3 IP solution announced by Synopsys provides a controller, PHY and verification IP for 2.5D multi-die package systems, and Synopsys claims it lets designers bring lower-power, higher-bandwidth memory to their SoCs. Synopsys' DesignWare HBM3 controller and PHY IP are based on its silicon-proven HBM2E IP, and the HBM3 PHY IP is built on a 5nm process. The per-pin data rate reaches 7200 Mbps, raising memory bandwidth to 921GB/s.
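The 921GB/s figure follows directly from the per-pin rate. The short Python sketch below reproduces the arithmetic; it assumes a 1024-bit data interface per HBM stack, which the article does not state, and the function name is purely illustrative.

```python
def hbm_stack_bandwidth_gbs(pin_rate_gbps: float, bus_width_bits: int = 1024) -> float:
    """Peak bandwidth of one HBM stack in GB/s (decimal units).

    bus_width_bits defaults to 1024, an assumed interface width per stack
    that is not given in the article.
    """
    # Each data pin moves pin_rate_gbps gigabits per second; divide by 8 for bytes.
    return pin_rate_gbps * bus_width_bits / 8


# 7200 Mbps per pin, as quoted for the Synopsys HBM3 PHY
hbm3_peak = hbm_stack_bandwidth_gbs(7.2)
print(f"{hbm3_peak:.1f} GB/s")
```

Under that assumption the result is 921.6 GB/s, which matches the quoted 921GB/s once the fraction is dropped.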

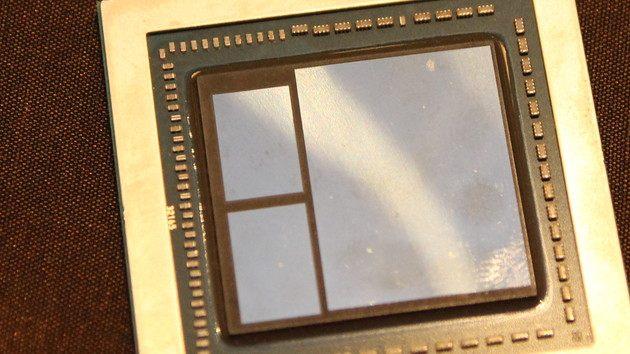

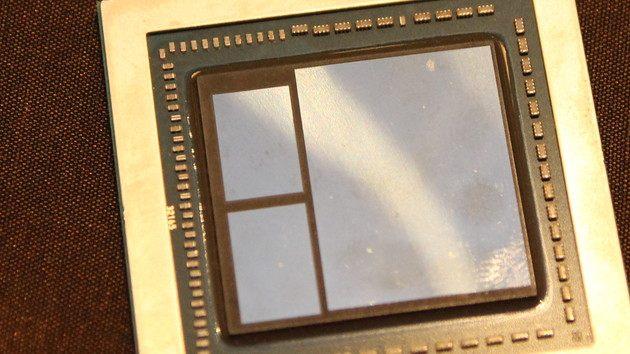

Packaging boost

The figures above are for a single HBM stack. When 2 or 4 stacks are integrated through 2.5D packaging, memory bandwidth multiplies accordingly. Take Nvidia's A100 accelerator as an example. Nvidia's first version, with 40GB, uses five HBM2 stacks to achieve a bandwidth of 1.6TB/s. An 80GB version with five HBM2E stacks followed, raising the bandwidth to 2TB/s. Bandwidth in this class can be reached with only 2 HBM3 stacks, and configurations with 4 or 5 stacks would far exceed the memory specifications available on the market today.
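As a rough check on the comparison with the A100, the sketch below scales the 7.2Gbps per-pin rate across several HBM3 stacks. It assumes a 1024-bit interface per stack and ideal linear scaling, neither of which the article states; the constant and function names are illustrative only.

```python
HBM3_PIN_RATE_GBPS = 7.2  # per-pin rate quoted in the article
BUS_WIDTH_BITS = 1024     # assumed interface width per stack (not from the article)


def aggregate_bandwidth_tbs(num_stacks: int) -> float:
    """Ideal peak bandwidth in TB/s for num_stacks HBM3 stacks on one package."""
    per_stack_gbs = HBM3_PIN_RATE_GBPS * BUS_WIDTH_BITS / 8
    return num_stacks * per_stack_gbs / 1000


for n in (1, 2, 4, 5):
    print(f"{n} stack(s): {aggregate_bandwidth_tbs(n):.2f} TB/s")
```

With these assumptions, two stacks come to about 1.84 TB/s, above the 1.6TB/s figure and close to the 2TB/s figure quoted for the A100, while four or five stacks land well beyond both.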

In addition, combining logic with HBM is not new; many GPUs and server chips have adopted similar designs. However, as fabs keep advancing 2.5D packaging technology, the number of HBM stacks on a single chip is also increasing. For example, the TSMC CoWoS technology mentioned above can integrate more than 4 HBM stacks alongside the SoC. Nvidia's P100 integrates 4 HBM2 stacks, and NEC's SX-Aurora vector processor integrates 6.

Samsung is also developing its next-generation I-Cube 2.5D packaging technology. In addition to supporting the integration of 4 to 6 HBM stacks, it is developing an I-Cube 8 solution with two logic dies plus 8 HBM stacks. Intel's EMIB is a similar 2.5D packaging technology, but Intel mainly uses HBM in its Agilex FPGAs.

Concluding remarks

At present, Micron, Samsung, SK Hynix and other memory manufacturers are already following this new DRAM standard. SoC designer Socionext has worked with Synopsys to bring HBM3 into its multi-die designs. Besides the x86 architecture, which is bound to support it, Arm's Neoverse N2 platform also plans to support HBM3, and SiFive's RISC-V SoCs have added HBM3 IP as well. But even if JEDEC does not stall and releases the official HBM3 standard by the end of the year, we may have to wait until the second half of 2022 to see HBM3 products become available.

HBM2/2E already appears on many high-performance chips, especially in data center applications, such as Nvidia's Tesla P100/V100, AMD's Radeon Instinct MI25, Intel's Nervana neural network processor, and Google's TPU v2.

Consumer-level applications, meanwhile, seem to be drifting away from HBM. In the past, AMD's Radeon RX Vega 64/Vega 56 and Intel's Kaby Lake-G were graphics products that used HBM, and at the higher end there were professional graphics GPUs such as Nvidia's Quadro GP100/GV100 and AMD's Radeon Pro WX.

Today these product lines all use GDDR DRAM. After all, consumer applications face no bandwidth bottleneck; speed and cost are what chip manufacturers value most. HBM3, however, touts larger bandwidth and higher efficiency but does not reduce cost.